AIML: Cut Through the Noise

Machine learning and artificial intelligence are a leap forward for life and annuity actuarial modeling

Spring 2020

Artificial intelligence (AI) and machine learning (ML), collectively referred to as AIML, have been hot topics lately. And for good reason: These technologies are bringing significant advances that are reshaping the world as we know it. From driverless cars to cancer detection, AIML already has presented tremendous breakthrough opportunities to myriad industries, and the adoption and expansion of business applications are only expected to accelerate. In the financial services sector, FinTech firms have developed concepts leveraging AIML such as chatbots, automated document processing and deep hedging (hedging strategies informed by ML algorithms). Banking and insurance companies increasingly are investing in these efforts.

Actuaries are taking notice. The Society of Actuaries (SOA) made predictive analytics a component of its strategy,[1] expanded its candidate curriculum and launched a related Actuarial Innovation & Technology Strategic Research initiative.[2] Andrew D. Rallis, FSA, MAAA, president of the SOA, established AIML as an area of focus in his presidential luncheon speech at the 2019 SOA Annual Meeting & Exhibit.[3]

AIML likely will disrupt our work like other technologies have, with an ever-increasing pace of change. Just as we can’t fathom what our work would have looked like without technologies we now take for granted—such as distributed processing, first principles models or even basic data storage capabilities—AIML soon will be a common component of the actuarial toolkit.

With all that said, it can be hard to cut through the noise surrounding AIML and get a clear understanding of the concrete applications this emerging technology has for the life and annuity insurance industry. Keeping up with the latest disruptions brought by AIML is important, given its transformative impact on the insurance industry, but our objective with this article is narrower: to share concrete, present-day applications in actuarial modeling through which actuaries can harness the power of AIML.

Overview of AIML Techniques

AI can be thought of as the combination of various branches (ML, natural language processing, speech and robotics) that together can simulate intelligent behavior. Using a set of techniques that often overlaps with predictive analytics, ML algorithms take the inputs an AI system provides and return outputs that simulate its “thinking” behavior. ML techniques have risen in prominence and effectiveness because of the depth and breadth of readily available data, a key ingredient in training and informing ML algorithms.

Various types of algorithms commonly employed in AIML are relevant for actuarial modeling. This article explores practical examples that leverage specific classes, such as artificial neural networks (ANNs), generalized linear models (GLMs) and k-means clustering, to provide a sense of how those algorithms work and can be put into practice. Readers should note that many other types of AIML algorithms exist (and still others have yet to be invented). Some examples include decision trees, random forests, gradient boosting machines (GBMs) and support vector machines. Actuaries interested in exploring these techniques can benefit from an extensive AIML community where documentation, research and open-source libraries are available.

While most trained AIML algorithms can be evaluated without extensive computing power, training them can be demanding. This is especially true when the application requires deep learning, a subset of ML that uses neural networks with multiple layers and can reach higher levels of performance given enough data. Often, actuarial applications of AIML require deep learning to attain the level of precision actuaries seek.

Companies that want to use this technology successfully need to develop the expertise and capabilities to identify new use cases and design suitable algorithms for them, establish a conducive environment, and identify the associated risks and controls. Further, while AIML algorithms commonly lack transparency, various methodologies are available to explain them.[4]

Augmenting Actuarial Models With ML

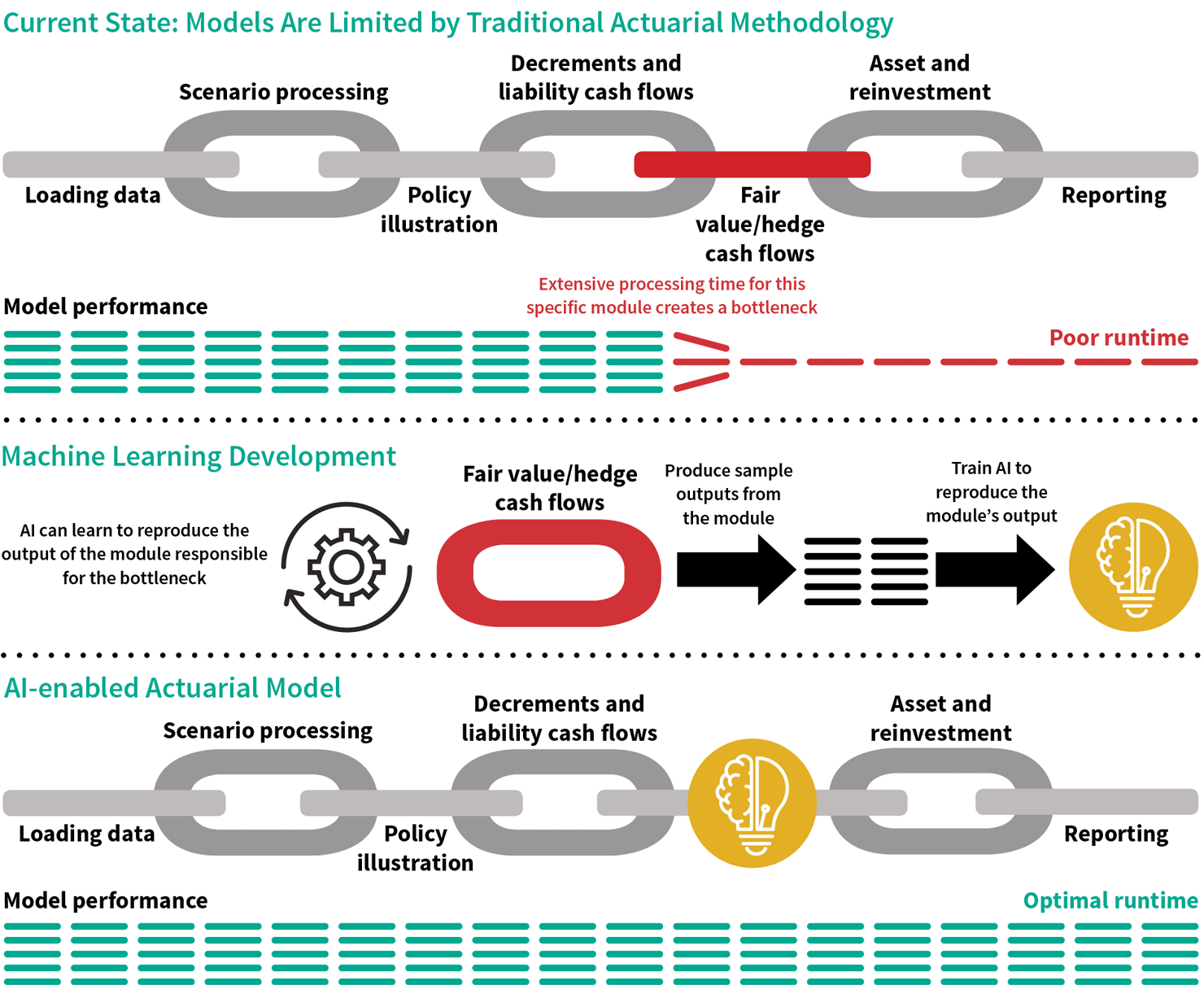

A key opportunity is embedding AIML in our models. This can appear counterintuitive, since some may think of AIML as an alternative methodology, but AIML can significantly boost the speed and accuracy of our models by replacing specific components. The premise is that AIML can reproduce a specific actuarial calculation faster than an actuarial model can when processing it from first principles. See Figure 1 for an example.

Figure 1: Simplified Illustration of the Calculation Components of a Variable Annuity Projection Model

To see how this works, first think of an actuarial model as a collection of calculation components, or modules. A module could be, for example, an account value roll-forward or a nested calculation. Processing an actuarial model typically involves a large number of calculations, and actuaries are used to facing long model runtimes and having to resort to approximations.

Actuaries interested in implementing AIML in actuarial models should first identify which modules are responsible for the undesirable runtime. Once a module is identified, an AIML algorithm can be trained to accurately predict that module’s output. A key advantage of this application is that the actuary controls both the methodology and the volume of training data, since the data are generated by the actuarial model itself. Further, developing the AIML algorithm provides an opportunity to gain a greater understanding of the specific calculations targeted.
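To make the workflow concrete, here is a minimal sketch under stated assumptions. The function `expensive_module` is hypothetical and stands in for a slow first-principles module. Here it is a Monte Carlo value of a one-year put-style guarantee, with 18% volatility and zero drift chosen purely for illustration. The sketch samples inputs, runs the slow module to label them, fits a cheap proxy and checks the proxy's error on fresh inputs. For brevity the proxy is a least-squares polynomial rather than a neural network, but the train-then-validate steps are the same.

```python
import numpy as np

def expensive_module(moneyness, n_paths=20_000, seed=0):
    """Hypothetical stand-in for a slow first-principles module.

    Monte Carlo value of a one-year put-style guarantee per unit of guarantee,
    where moneyness = account value / guaranteed amount. The lognormal return
    model (18% volatility, zero drift) is an illustrative assumption. A fixed
    seed gives common random numbers, so the output varies smoothly with moneyness.
    """
    rng = np.random.default_rng(seed)
    z = rng.standard_normal(n_paths)
    sigma = 0.18
    av_end = moneyness * np.exp(-0.5 * sigma**2 + sigma * z)
    return float(np.mean(np.maximum(1.0 - av_end, 0.0)))

def build_training_set(n_samples, seed):
    """Sample inputs and label each one by running the slow module."""
    rng = np.random.default_rng(seed)
    x = rng.uniform(0.6, 1.6, n_samples)
    y = np.array([expensive_module(m) for m in x])
    return x, y

x_train, y_train = build_training_set(200, seed=1)
# Cheap proxy: a least-squares polynomial in moneyness. In practice a neural
# network or GBM would play this role, with many more inputs.
proxy = np.polynomial.Polynomial.fit(x_train, y_train, deg=8)

# Validate on inputs the proxy has not seen.
x_test, y_test = build_training_set(50, seed=2)
max_abs_error = float(np.max(np.abs(proxy(x_test) - y_test)))
print(f"max absolute error on held-out inputs: {max_abs_error:.5f}")
```

Once validated, a call to `proxy(m)` replaces a full Monte Carlo run inside the projection. The held-out check is the piece that carries over to any proxy technique.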

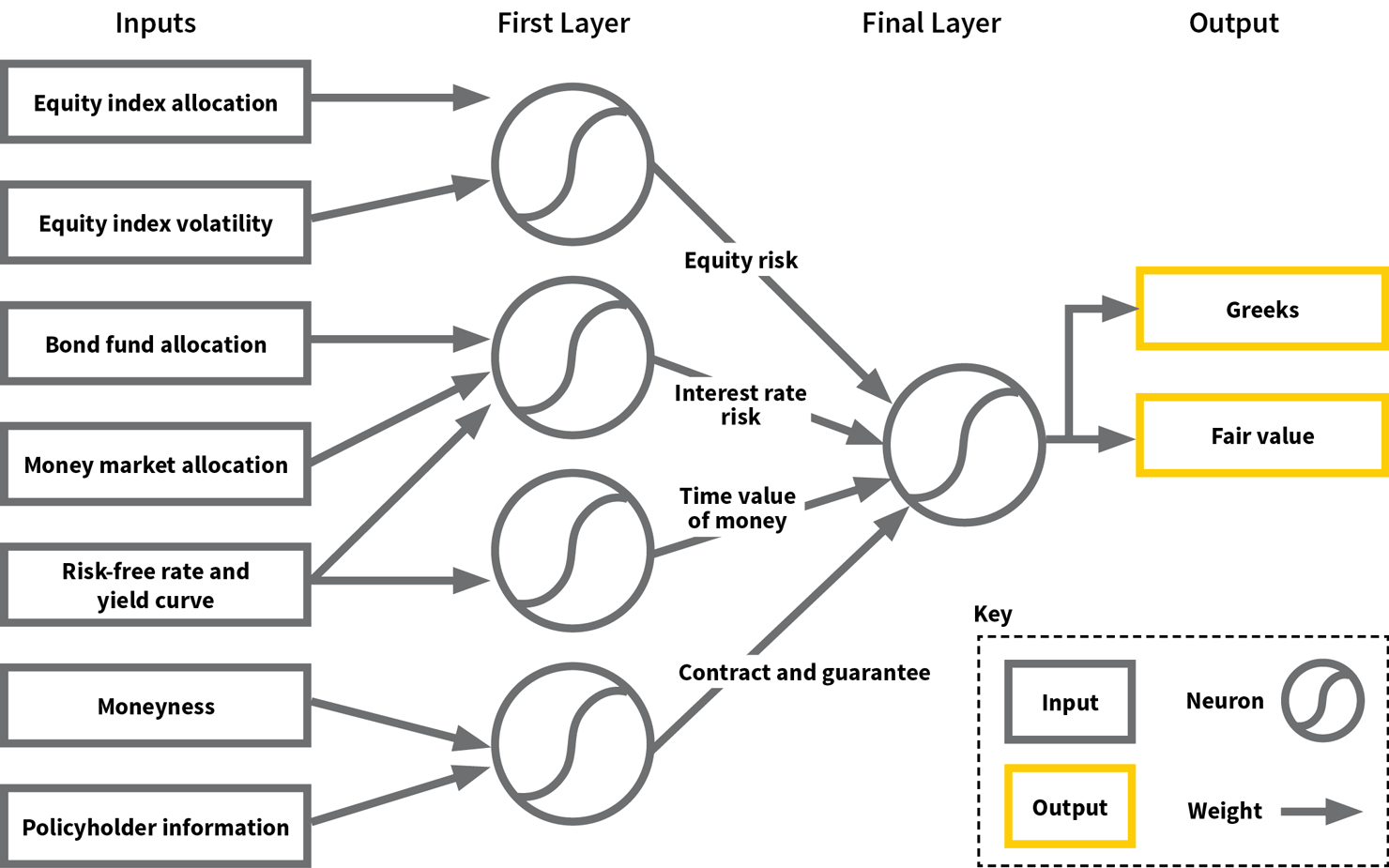

As a practical example, we can train a neural network to proxy variable annuity (VA) fair value (FV) and Greeks for hedge cash flow projections. This neural network can take as inputs the variables used by the VA FV module within the actuarial model (e.g., policyholder fund allocation, market variables), along with other relevant measures (e.g., moneyness). Using common training techniques, the neural network learns the relationships among those variables from the data provided.

A conceptual illustration of such a neural network is shown in Figure 2. This illustrative network first takes the inputs provided and infers information about equity risk, interest rate risk, time value of money and contract-specific contributions in its first layer of artificial neurons (mathematical functions conceived as a model of biological neurons). Each neuron computes a weighted combination of its inputs and applies a predefined activation function. The network then processes the information inferred by the first layer and transforms it again to produce an estimate of the FV and Greeks.
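The forward pass described above can be sketched in a few lines. This minimal illustration assumes a single hidden layer with a ReLU activation and a linear output layer. The three outputs are labeled FV, delta and rho purely for illustration. The weights are random placeholders; in practice they would come from training on actuarial model output.

```python
import numpy as np

def relu(z):
    """Activation function applied by each hidden neuron."""
    return np.maximum(z, 0.0)

def forward(x, W1, b1, W2, b2):
    """Two-layer feedforward network.

    x: (n_policies, n_inputs) matrix of inputs (e.g., fund allocation,
       market variables, moneyness).
    Returns an (n_policies, n_outputs) matrix of estimates (e.g., FV and Greeks).
    """
    hidden = relu(x @ W1 + b1)  # each hidden neuron: weighted sum of inputs plus bias, then activation
    return hidden @ W2 + b2     # output layer: weighted sum of hidden neurons, no activation

rng = np.random.default_rng(42)
n_inputs, n_hidden, n_outputs = 5, 8, 3  # hypothetical sizes; 3 outputs = FV, delta, rho
W1 = rng.normal(scale=0.5, size=(n_inputs, n_hidden))
b1 = np.zeros(n_hidden)
W2 = rng.normal(scale=0.5, size=(n_hidden, n_outputs))
b2 = np.zeros(n_outputs)

policies = rng.uniform(size=(4, n_inputs))  # four synthetic policies
estimates = forward(policies, W1, b1, W2, b2)
print(estimates.shape)  # (4, 3)
```

Evaluating this network costs only two small matrix products per batch of policies. That is why a trained proxy can be so much faster than a nested calculation.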

Figure 2: Illustrative Neural Network

With VA statutory reform and the changes to generally accepted accounting principles (GAAP) in the United States, many companies are looking for the capability to project FV and their hedge strategy, both to gain capital credit and to better understand their GAAP balance sheet. In our experience this has been a challenging task, and we have found that neural networks and other algorithms such as GBMs can provide reliable proxies.

Potential use cases for this application include many areas where our actuarial models must perform nested calculations. This includes not only FV and Greeks, but also projecting reserves, setting the parameters of exotic crediting strategies and many others.

Policyholder Behavior and Other Actuarial Assumptions

Actuarial assumptions are a critical component of our actuarial results and forecasts: They establish our view about how key insurance risks such as mortality, morbidity and policyholder behavior will unfold in the future. Generally, actuaries start by establishing a hypothesis as to the drivers of the risk, develop assumptions by leveraging data and other sources, and monitor actual-to-expected experience. While the traditional actuarial methods used to develop those assumptions remain valid, actuaries responsible for developing assumptions may gain further insights by applying techniques rooted in predictive analytics and ML.

Consider, for example, the behavior of variable and fixed indexed annuity policyholders. Actuaries typically start by looking at qualitatively intuitive dimensions such as policy duration and rider moneyness, among other items. Using predictive analytics methodologies, actuaries can quickly evaluate the relevance of a large number of variables and identify patterns and relationships that might otherwise have gone unnoticed. This is in addition to the natural objective of reducing the gap between actual and expected experience across the identified policyholder attribute dimensions.

Actuaries can use ML algorithms and other methodologies, such as principal component analysis, to learn how policyholders behaved in the past (based on historical data) and infer which variables best predict how policyholders will use their contract options. Although actuaries usually have a good sense of the key drivers, this process can provide additional insights. In some cases, actuaries could even use the fitted algorithm itself as an actuarial assumption.

Actuaries using these techniques should apply judgment when formalizing relationships and should distinguish between correlation and true cause and effect. Actuaries who decide to use AIML algorithms should select an approach that is fully interpretable and avoid the temptation to simply minimize the gap between actual and expected experience. In particular, we have found GLMs to be a popular option, because they provide greater transparency than other methods.
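As an illustration of the GLM approach, the sketch below fits a logistic (binomial) GLM of annual surrender probability on two hypothetical drivers, policy duration and rider moneyness. The fit uses iteratively reweighted least squares (IRLS). The data are synthetic and generated from assumed "true" coefficients, so the fit can be checked against them. A real experience study would use exposure-weighted company data and, typically, a dedicated statistics library.

```python
import numpy as np

def fit_logistic_glm(X, y, n_iter=25):
    """Fit a binomial GLM with a logit link by IRLS (Newton-Raphson).

    X: (n, p) design matrix including an intercept column.
    y: 0/1 outcomes (1 = surrendered during the year).
    """
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        eta = X @ beta                       # linear predictor
        mu = 1.0 / (1.0 + np.exp(-eta))      # inverse logit link
        w = mu * (1.0 - mu)                  # binomial variance = IRLS weights
        z = eta + (y - mu) / w               # working response
        XtW = X.T * w                        # scales column i of X.T by w[i]
        beta = np.linalg.solve(XtW @ X, XtW @ z)
    return beta

rng = np.random.default_rng(7)
n = 50_000
duration = rng.integers(1, 11, n)            # policy year 1 to 10 (synthetic)
moneyness = rng.uniform(0.5, 1.5, n)         # account value / guaranteed value (synthetic)
X = np.column_stack([np.ones(n), duration, moneyness])
true_beta = np.array([-4.0, 0.15, 1.2])      # assumed effects used only to simulate data
p = 1.0 / (1.0 + np.exp(-(X @ true_beta)))
surrendered = rng.binomial(1, p)

beta_hat = fit_logistic_glm(X, surrendered)
print(np.round(beta_hat, 2))
```

Each fitted coefficient is a change in log-odds of surrender per unit of its driver, and `np.exp(beta_hat)` gives odds ratios. This direct reading is a large part of why GLMs are considered transparent.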

Compressing Model Points

It is common for actuaries to seek to reduce the number of model points (e.g., in-force policy records or new business cells) to be processed by an actuarial model for certain use cases because of runtime limitations. The actuary may be seeking to process a large scenario set or project complex nested stochastic reserves. Attempting to process all model points in those situations could very well be a time-consuming, or even impossible, task.

A conventional approach today is to select representative policies by creating predefined segments across the data, based on the actuary’s judgment. For instance, the actuary evaluates which features of the model points are most important and then divides the range of values of each of these characteristics into buckets, such as five-year attained-age bands, gender, moneyness bands and so on.

Alternatively, actuaries may explore clustering techniques such as k-means clustering to drive the selection of representative policies. With these techniques, actuaries can base their selection of representative policies on the sets of characteristics that most effectively group the in-force block into policies that behave in a similar fashion. Using these techniques, actuaries likely will find they can significantly reduce the number of model points with minimal loss of precision.
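The sketch below shows cluster-based compression under stated assumptions. Each policy is described by three synthetic numeric features (attained age, account value and moneyness), standardized so no single feature dominates the distance. The target of 50 model points is a hypothetical choice. The code runs plain k-means (Lloyd's algorithm) and picks the actual policy closest to each centroid as that cluster's representative. Each representative is weighted by the number of policies in its cluster.

```python
import numpy as np

def assign(X, centroids):
    """Label each point with the index of its nearest centroid (squared Euclidean distance)."""
    d2 = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)  # shape (n, k)
    return d2.argmin(axis=1)

def kmeans(X, k, n_iter=50, seed=0):
    """Lloyd's algorithm, initialized with k randomly chosen policies."""
    rng = np.random.default_rng(seed)
    centroids = X[rng.choice(len(X), size=k, replace=False)].copy()
    for _ in range(n_iter):
        labels = assign(X, centroids)
        for j in range(k):
            members = X[labels == j]
            if len(members) > 0:          # keep the old centroid if a cluster empties
                centroids[j] = members.mean(axis=0)
    return centroids, assign(X, centroids)

def compress(X, k, seed=0):
    """Return indices of representative policies and their scaling weights."""
    centroids, labels = kmeans(X, k, seed=seed)
    reps, weights = [], []
    for j in range(k):
        members = np.flatnonzero(labels == j)
        if members.size == 0:
            continue
        dist = ((X[members] - centroids[j]) ** 2).sum(axis=1)
        reps.append(members[dist.argmin()])  # real policy nearest the centroid
        weights.append(members.size)         # number of policies it stands for
    return np.array(reps), np.array(weights)

rng = np.random.default_rng(3)
n = 10_000
features = np.column_stack([
    rng.uniform(45, 85, n),          # attained age (synthetic)
    rng.lognormal(11.5, 0.6, n),     # account value (synthetic)
    rng.uniform(0.6, 1.4, n),        # moneyness (synthetic)
])
scaled = (features - features.mean(axis=0)) / features.std(axis=0)
reps, weights = compress(scaled, k=50)
print(len(reps), weights.sum())
```

Weighting by policy count is the simplest choice. In practice actuaries might weight by account value or another exposure measure, and would validate the compressed block against full-run results.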

Other Applications

There are various other applications in the life and annuity insurance industry where actuaries can consider using predictive analytics or ML.

Process and Controls

ML can be used to implement new checks and controls. This can be particularly useful for production processes such as quarterly actuarial valuation, allowing actuaries to identify errors earlier, including errors that would otherwise have been missed. For instance, an ML model could be trained to flag suspicious policy records in the in-force file before actuarial calculations start. Similarly, actuaries could train ML models on results from previous valuation quarters to identify incorrect results.
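One way to sketch the second idea is to fit a model on prior-quarter results and then flag current-quarter policies whose reserve deviates from the prediction by more than a chosen multiple of the historical residual spread. This sketch assumes hypothetical per-policy reserves driven by account value and moneyness. A plain linear regression stands in for a richer ML model. The synthetic data, the injected errors and the 4-sigma threshold are all illustrative assumptions.

```python
import numpy as np

def fit_reserve_model(X_prior, reserve_prior):
    """Least-squares fit of reserve on drivers; returns coefficients and residual std."""
    A = np.column_stack([np.ones(len(X_prior)), X_prior])
    coef, *_ = np.linalg.lstsq(A, reserve_prior, rcond=None)
    resid_std = float(np.std(reserve_prior - A @ coef))
    return coef, resid_std

def flag_suspicious(X_now, reserve_now, coef, resid_std, n_sigma=4.0):
    """Indices of policies whose reserve is far from what the prior-quarter model predicts."""
    A = np.column_stack([np.ones(len(X_now)), X_now])
    residual = reserve_now - A @ coef
    return np.flatnonzero(np.abs(residual) > n_sigma * resid_std)

rng = np.random.default_rng(11)

def synthetic_quarter(n):
    """Hypothetical valuation extract: drivers and per-policy reserves."""
    X = np.column_stack([rng.uniform(50_000, 500_000, n),   # account value
                         rng.uniform(0.6, 1.4, n)])          # moneyness
    reserve = 0.05 * X[:, 0] - 20_000 * (X[:, 1] - 1.0) + rng.normal(0, 1_000, n)
    return X, reserve

X_prior, r_prior = synthetic_quarter(5_000)
coef, resid_std = fit_reserve_model(X_prior, r_prior)

X_now, r_now = synthetic_quarter(1_000)
r_now[10] += 50_000    # inject two data errors into the current quarter
r_now[500] -= 50_000
flagged = flag_suspicious(X_now, r_now, coef, resid_std)
print(flagged)
```

The threshold trades missed errors against false alarms. A production control would be tuned on several quarters of history rather than a single synthetic one.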

Actuarial System Conversions

As most modeling actuaries know, migrating actuarial applications from a legacy system to a new platform can be a daunting task. Often, most of the time and effort is spent identifying and reconciling differences in records between the two platforms. Techniques based on data science, such as principal component analysis, can help actuaries identify differences faster and accelerate the conversion.
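Here is one way principal component analysis (PCA) might help, sketched under assumptions. Suppose we have a hypothetical matrix of relative differences between legacy and new-platform results, with one row per policy and one column per projection year. If most of the mismatch has one systematic cause, the first principal component should capture most of the variance. In this synthetic example the cause is a difference that grows with projection year on a subset of policies. The policies with the largest scores on that component are the first candidates to investigate.

```python
import numpy as np

def principal_components(D):
    """PCA via SVD of the column-centered matrix.

    Returns per-row scores, component loadings (rows of Vt) and explained variance ratios.
    """
    centered = D - D.mean(axis=0)
    U, s, Vt = np.linalg.svd(centered, full_matrices=False)
    scores = U * s                        # projection of each policy onto each component
    explained = s**2 / np.sum(s**2)
    return scores, Vt, explained

rng = np.random.default_rng(5)
n_policies, n_years = 2_000, 30
years = np.arange(1, n_years + 1)
diff = rng.normal(0, 0.001, (n_policies, n_years))        # small, unstructured noise
affected = rng.choice(n_policies, size=100, replace=False)
diff[affected] += 0.002 * years                             # synthetic systematic drift

scores, loadings, explained = principal_components(diff)
top_suspects = np.argsort(-np.abs(scores[:, 0]))[:100]
print(f"PC1 explains {explained[0]:.0%} of the variance")
print(f"{np.isin(top_suspects, affected).mean():.0%} of the top 100 policies are truly affected")
```

The loadings of the first component (`loadings[0]`) show the shape of the difference across projection years. That shape can hint at its cause, for example a timing or interest-crediting discrepancy.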

Replacing Actuarial Model Runs

Similar to the actuarial model augmentation described earlier, actuaries also could train ML algorithms to predict specific results of actuarial model runs, such as specific metrics, reserves or rates for insurance products. This allows actuaries to get insights faster in any application where frequent or manual model runs are needed.

Financial Planning & Analysis (FP&A) and Asset/Liability Management (ALM)

Lastly, ML can be used to accelerate and streamline the process to generate forecasts for management as well as enhance the ALM and/or hedging functions.

Limitations and Key Risks Involved

While we believe AIML and predictive analytics can bring significant value to the actuarial function, there are important implications that adopters should keep in mind when using this technology. Some examples are:

- Interpretability. A critical aspect adopters need to keep in mind is the lack of interpretability associated with most ML models. While neural networks, GBMs and random forests—when trained properly—can reproduce a data set with a high degree of fidelity, users cannot easily trace the calculations leading to the prediction. While various tools and methodologies can be used to interpret these models, they do not provide as much transparency as actuaries are used to.

- Overfitting. Another critical aspect is the risk of overfitting. If not monitored, models can learn irrelevant details or reproduce noise in the data. This is a key risk for most applications of ML outside actuarial work, as well as for the actuarial assumption use case highlighted in this article. Various methodologies exist to avoid or minimize overfitting, such as evaluating the model on holdout validation data.

- Labeled data. Lastly, ML requires large volumes of labeled data, and actuaries may not have enough on hand to adequately train the applicable algorithms. For applications leveraging policyholder or other “real” data, actuaries may seek to supplement their company database with external sources. For applications that aim to reproduce specific actuarial calculations, users may find it challenging to produce a sufficient data set from the actuarial models, or they may need to develop new automation procedures to do so.
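The overfitting risk noted in the list above can be illustrated with a small example, along with one common guard against it: a holdout validation set. The data are synthetic, drawn from a smooth curve plus noise. A low-degree and a very high-degree polynomial are fitted to the same small training sample. The flexible model fits the training points more closely but typically does worse on data it has not seen. Polynomials stand in here for any flexible ML model.

```python
import numpy as np

rng = np.random.default_rng(0)

def sample(n):
    """Synthetic data: smooth signal plus noise."""
    x = rng.uniform(-1, 1, n)
    y = np.sin(2 * x) + rng.normal(0, 0.2, n)
    return x, y

x_train, y_train = sample(20)    # small training set
x_valid, y_valid = sample(200)   # holdout set, never used for fitting

def mse(model, x, y):
    return float(np.mean((model(x) - y) ** 2))

results = {}
for degree in (3, 15):
    model = np.polynomial.Polynomial.fit(x_train, y_train, deg=degree)
    results[degree] = (mse(model, x_train, y_train), mse(model, x_valid, y_valid))
    print(f"degree {degree:2d}: train MSE {results[degree][0]:.3f}, "
          f"validation MSE {results[degree][1]:.3f}")
```

Comparing training and validation error side by side is the basic diagnostic. A large gap between the two is the signature of overfitting.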

Putting It All Together

There is a wide range of potential applications for the modeling actuary looking to leverage AIML and predictive analytics. These techniques can provide efficient solutions to the complex, nonlinear function approximation and classification problems common in modeling-related applications.

From accelerating model calculations, developing actuarial assumptions and compressing model points, to providing enhanced controls, accelerating actuarial system conversions and substituting repetitive model runs, AIML has the potential to revolutionize our actuarial capabilities.

The views expressed in this article are solely the views of Dave Czernicki, Peter Carlson, Jean-Philippe Larochelle and Jonathan DeGange and do not necessarily represent the views of Ernst & Young LLP or other members of the global EY organization. The information presented has not been verified for accuracy or completeness by Ernst & Young LLP and should not be construed as legal, tax or accounting advice. Readers should seek the advice of their own professional advisers when evaluating the information.

References:

1. Society of Actuaries. Strategic Plan. Society of Actuaries (accessed January 26, 2020).

2. Society of Actuaries. Actuarial Innovation & Technology Strategic Research. Society of Actuaries (accessed January 26, 2020).

3. Society of Actuaries. Presidential Luncheon Speech, 2019 SOA Annual Meeting & Exhibit. Society of Actuaries (accessed January 26, 2020).

4. Murdoch, W. James, Chandan Singh, Karl Kumbier, Reza Abbasi-Asl, and Bin Yu. 2019. Interpretable Machine Learning: Definitions, Methods and Applications. Proceedings of the National Academy of Sciences of the United States of America 116, no. 44: 22071–22080.

Copyright © 2020 by the Society of Actuaries, Chicago, Illinois.