Human–computer Interaction

The actuary’s next behavioral science toolkit

April/May 2019

“64 6f 20 79 6f 75 20 75 6e 64 65 72 73 74 61 6e 64 3f.” Do you understand? Unless you are a computer with a hexadecimal translator, this series of alphanumeric characters should not mean much to you. However absurd this question may seem, there was a point in time when programmers had to write code at this level of interaction with machines. In this article, we will explore how human–computer interaction (HCI) has transformed, and will continue to transform, our industry through both the tools we use and the ways we communicate. We will draw on studies of HCI and reference the impact of behavioral economics (BE) on our industry to demonstrate how these lessons influence our work.
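For readers curious about what the "hexadecimal translator" would do, the following is a minimal Python sketch. It assumes each pair of characters is one ASCII byte written in base 16; the function names `decode_hex` and `encode_hex` are illustrative only.

```python
# The opening line of the article, as space-separated hexadecimal bytes.
hex_string = "64 6f 20 79 6f 75 20 75 6e 64 65 72 73 74 61 6e 64 3f"


def decode_hex(text: str) -> str:
    """Turn space-separated hex byte pairs into ASCII text."""
    # bytes.fromhex skips the spaces between byte pairs.
    return bytes.fromhex(text).decode("ascii")


def encode_hex(text: str) -> str:
    """Turn ASCII text back into space-separated hex byte pairs."""
    return " ".join(f"{byte:02x}" for byte in text.encode("ascii"))


print(decode_hex(hex_string))  # do you understand?
```

The machine sees the same information either way; only the human-facing representation changes.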

Parallels Between BE and HCI

From a systems perspective, behavioral economics can be viewed as a disconnect, or entropy, between the data coming in to a human, with all of a human’s constraints, and the decisions that result. For example, choice overload is a BE concept in which reducing the number of choices makes it easier for an individual to reach a decision. From a purely efficient market view, this makes no sense, as optionality in a vacuum should only lead to better outcomes. The issue, then, is that a human must take in the information, and that human is limited in how effectively he or she can process all the data.

Much like behavioral economics, HCI is something the financial industry frequently uses without thinking about it in a structured context. HCI can be viewed as a disconnect between the data a machine has aggregated or computed and a human’s ability to interpret that data and draw actionable insight from it. Because of this disconnect, we present graphs instead of spreadsheets full of numbers: just as the human benefits from fewer choices in a BE world, the human benefits from a less detailed, graphical illustration in the HCI world. Appreciating how internal technology has progressed to cater to HCI and these resulting disconnects may provide some insight into what the future holds.

Driving the Tools We Use

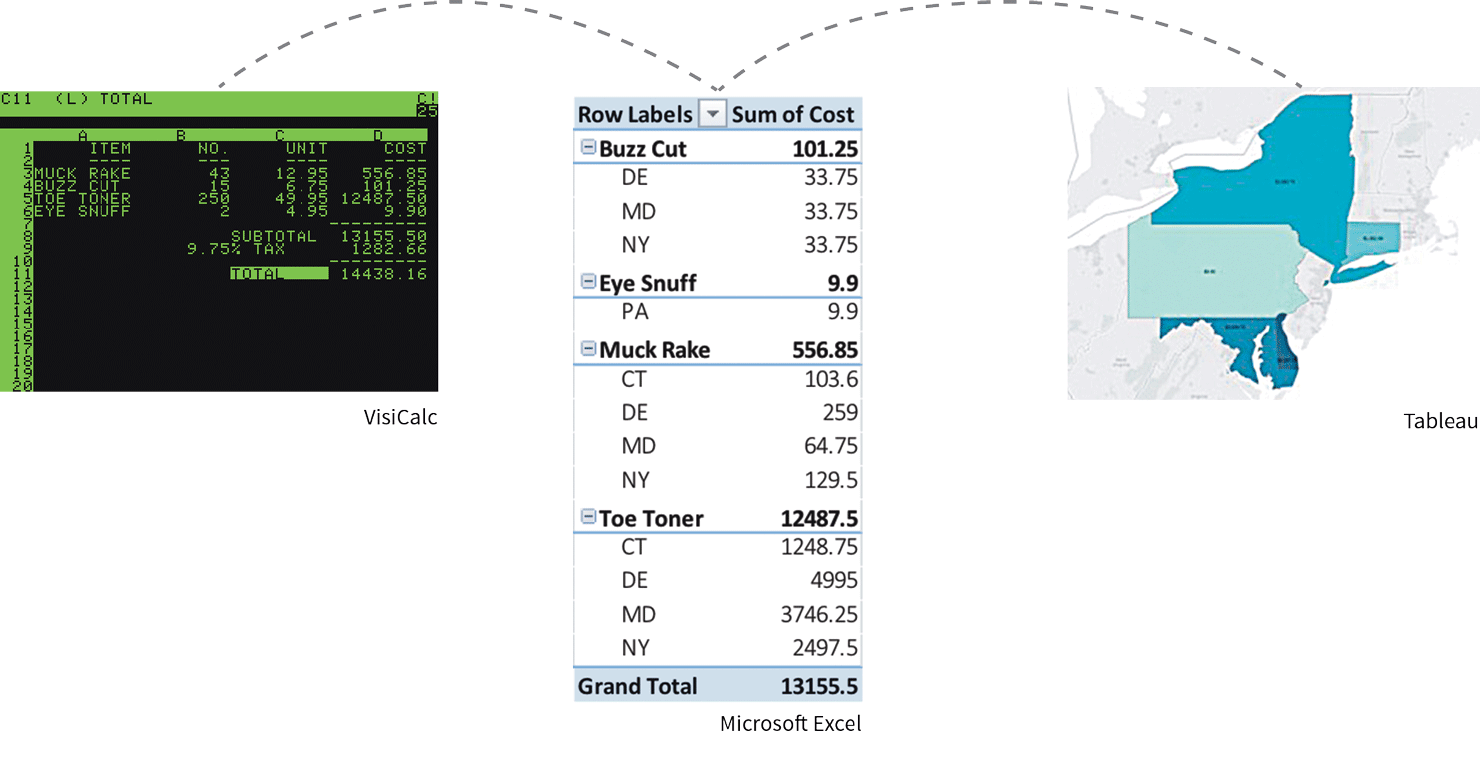

One of the most widely used tools in the actuarial profession is arguably the spreadsheet. VisiCalc, the original predecessor to our spreadsheet applications, was created, as the name suggests, to serve as a “visible calculator.” The display on VisiCalc showed multiple rows on the screen rather than just the current number in a calculation. This visual structure helped to remove the memory requirement for interacting with a calculator with only one row.

After VisiCalc, Microsoft Excel entered and dominated the market. Excel was better structured to depict and process spreadsheet information through the use of pivot tables, graphs and database connections. These additional functions were built from a spreadsheet base to help better communicate data with the end users.

While actuaries are familiar with the power behind pivot tables and the aggregation of data, pivot table presentation is still only textual, and it takes a significant amount of brainpower to process large sets. Tableau takes this visualization one step further and turns the rows and columns of a pivot table into “dimensions,” “measures” and “marks.” Dimensions hold categorical data, while measures hold numbers. Marks are the additional details we want to show in the graphic, such as color and size. By aligning data to these constraints, the human user can easily categorize the data and understand where each variable belongs in a graphical representation.
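The distinction between a dimension and a measure can be sketched in a few lines of Python. This is not how Tableau works internally; it is a toy illustration. The policy records, the field names and the helper functions `pivot_sum` and `text_bars` are all hypothetical and invented for this example.

```python
from collections import defaultdict

# Hypothetical policy records, invented purely for illustration.
records = [
    {"product": "Term", "region": "East", "premium": 1200.0},
    {"product": "Term", "region": "West", "premium": 900.0},
    {"product": "Annuity", "region": "East", "premium": 5000.0},
    {"product": "Annuity", "region": "West", "premium": 4500.0},
    {"product": "Term", "region": "East", "premium": 800.0},
]


def pivot_sum(rows, dimension, measure):
    """Sum a numeric measure for each value of a categorical dimension."""
    totals = defaultdict(float)
    for row in rows:
        totals[row[dimension]] += row[measure]
    return dict(totals)


def text_bars(totals, width=30):
    """Draw one bar per category; bar length plays the role of a 'mark'."""
    largest = max(totals.values())
    lines = []
    for label, value in sorted(totals.items()):
        bar = "#" * round(width * value / largest)
        lines.append(f"{label:<8} {bar} {value:,.0f}")
    return lines


by_product = pivot_sum(records, "product", "premium")
print(by_product)                        # the textual, pivot-table view
print("\n".join(text_bars(by_product)))  # the same totals as bars
```

Swapping `"product"` for `"region"` changes the dimension while the measure stays the same, which is the kind of substitution a visualization tool lets the user make by dragging a field.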

VisiCalc (left) was the original predecessor to spreadsheet applications. It was superseded by Microsoft Excel (center), which is now being complemented by Tableau (right) for its ability to take data visualization one step further.

This increased emphasis on the end communication, however, is not a panacea. One of the widely documented behavioral aspects of humanity is that we tend to be very good at finding patterns. In fact, we are so good at finding patterns that we often find them when they don’t exist.¹ Just as overfitting is possible in modeling, it would not be a stretch to state that these tools can be used to tell stories that mislead ourselves and others. Additionally, there are limitations on both the front (data preparation) and back (intelligent analytics) sides of the Tableau experience. During data cleaning and preparation, many Tableau users find themselves working in Excel and performing SQL queries just to get data into a usable format. On the back end, there are several analytic overlays available, such as trends, forecasts and clustering, but this is far from an extensive list. Furthermore, it is not a seamless experience to incorporate R, Python or other more analytic-heavy technologies.

In the future, more solutions will exist to address these HCI issues around data collection and the modular incorporation of other technologies. There are already third-party data providers that collect, interpret and clean publicly available data. Cloudera, IBM, Microsoft and countless other data management companies are creating interfaces and tools for deeper analytics. Tableau has also released separate “Prep” software aimed at addressing some of these issues.

Driving Product Decisions

Humans experience more pain from losing a dollar than joy from gaining one. In behavioral economics, this is an example of the loss aversion inherent in our thought processes. Companies have adapted to these preferences by developing new products and features. Managed volatility funds, for instance, can be seen as an attempt by variable annuity departments to address loss aversion. Generally, these funds give up some level of upside potential in exchange for a more level stream of returns.
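One common way to put numbers on loss aversion is a prospect-theory style value function. The sketch below is illustrative only: the functional form and the default parameters (`alpha = 0.88`, `lam = 2.25`) are assumptions borrowed from commonly cited estimates by Tversky and Kahneman, not figures from this article, and the function name `prospect_value` is hypothetical.

```python
def prospect_value(x: float, alpha: float = 0.88, lam: float = 2.25) -> float:
    """Subjective value of gaining (x > 0) or losing (x < 0) x dollars.

    alpha < 1 gives diminishing sensitivity; lam > 1 makes losses weigh
    more than gains of the same size. Both defaults are assumed values.
    """
    if x >= 0:
        return x ** alpha
    return -lam * (-x) ** alpha


gain = prospect_value(1.0)   # felt value of gaining one dollar
loss = prospect_value(-1.0)  # felt value of losing one dollar
print(gain, loss)
```

Under these assumed parameters, losing a dollar is felt a little more than twice as strongly as gaining one, which is the asymmetry that a smoother stream of returns is meant to ease.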

Just as the insurance industry has adapted on the consumer front to consider behavioral economics, it has adjusted, and will continue to adjust, to consider customer HCI. Insurance has had a reputation for being complex and needing to be “sold” to customers. But that raises the question: Should the sale of insurance to consumers be so complex? Is the concept of being paid by a property and casualty (P&C) company for a product warranty, or by a life insurance company upon a death, really more complicated than coordinating a driver to pick me up and take me to a random destination through a ride-sharing company like Uber? It comes down largely to the human interaction and the presentation to the customer.

The Actuarial Connection

Human–computer interaction (HCI) is the study of how humans interact with technology. Insurance products and analytical tools have evolved—and will continue to evolve—to account for human strengths and weaknesses of interacting with technology and underlying data. From new visualization tools to new product designs and data collection methods, actuaries already are utilizing HCI insights and can further benefit by being cognizant of these interplays.

Often in behavioral studies, there is a focus on humans as the limitation in a more efficient outcome. However, where there are weaknesses, there are also strengths that are important to recognize and utilize. In fact, technological innovations often are simply trying to mirror how humans naturally try to interpret data. Some companies have stood out as being more proactively focused on their interaction with technology. Lemonade is one such P&C company that has emerged, offering instant home and renters’ insurance. Protective has some life insurance offerings alongside student loan consolidations. Life insurers as a whole have moved toward simplified issuing. Several home insurance companies have adapted products to the short-term rental market. Here at Voya, we recently leveraged artificial intelligence (AI) technology to help customers with visual impairments verbally navigate through their accounts.

Driving the Future of Our Interactions

While some companies have stepped up to try to meet the expectations technological innovation has created, the insurance industry still has plenty of opportunity to utilize HCI and BE concepts when thinking about everything from distribution to pricing and product features. The products are often still complex, siloed, illiquid and not easy to value or purchase. Websites such as Mint and Wealthfront are able to integrate real-time estimations on portfolios, homes and cars, but they are unable to put together a rough estimate on a life insurance policy. We are able to purchase and sell $25 of someone’s debt through LendingClub or Prosper, but we are unable to purchase $25 increments of life insurance as easily. Robo-advisors can display investment projections of funds and the fees associated, but they are unable to layer in annuities. In the near future, we will surely see attempts to solve these shortcomings; actuaries need to decide if insurance companies will be the ones providing these solutions.

Autonomous driving, peer marketplaces, crowdsourcing, AI, cryptocurrencies, virtual reality, robo-advisors and countless other emerging technologies will have consequences for the insurance industry. Each of these technologies will also allow for a different form of HCI, whether it is an internal analytical tool or an avenue for sales. Actuaries need to decide how much of this interaction will be consciously designed, or whether the industry will continue to be pulled along in order to survive.

References:

1. Skinner, B. F. “Superstition in the Pigeon.” Journal of Experimental Psychology.

Copyright © 2019 by the Society of Actuaries, Chicago, Illinois.